Asymmetric Multiprocessing on Boundary Devices Nitrogen 7

Introduction¶

This Technical Note presents the results of our work on asymmetric multiprocessing on the Boundary Devices Nitrogen 7. In particular, we have explored the case of reading and displaying data from an Inertial Measurement Unit (IMU) on a heterogeneous asymmetric system. The workload is distributed across the two cores: the MCU (Cortex-M4, running FreeRTOS) takes samples from an IMU board (over I2C) and periodically sends data to the MPU (Cortex-A7, running Yocto Linux) over an RPMsg channel. Accelerometer, gyroscope and magnetometer data are then logged on the MPU by a user-space application.

Another goal of this demo is to recover from a kernel panic on the MPU side. In the event of a kernel crash, the MCU keeps sampling the IMU and buffers data until the MPU is up again, in order to lose as little data as possible during downtime.

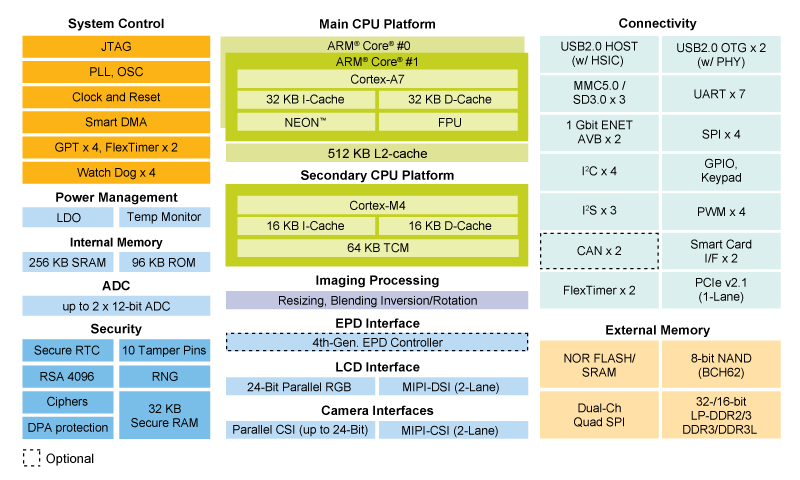

A diagram of the i.MX7D structure is shown below:

In the i.MX7 architecture:

- The Cortex-A7 is the master, meaning that it is responsible for booting the Cortex-M4 core (i.e. starting clocks, writing firmware address information into the M4 bootROM, and taking the M4 out of reset). Once started, both CPUs run independently, executing different instructions at different speeds.

- The two cores communicate with each other. In hardware, this is enabled by the Messaging Unit. In software, this is enabled by RPMsg and the OpenAMP framework.

- Both cores access the same peripherals, therefore a mechanism for avoiding conflicts is required. In the i.MX7 architecture, processor isolation is ensured by the Resource Domain Controller.

This demo has two main goals:

- The MPU retrieves the IMU measurements sampled by the MCU and logs the data to a text file

- The MPU safely recovers from a kernel crash and retrieves the data buffered by the MCU during downtime

More detailed information about the Messaging Unit and the RDC components can be found in the i.MX7D Reference Manual (Sections 3.2 and 4.3).

The kernel and BSP versions used in this demo are:

- Buildroot 2017.08 featuring Linux Kernel 4.9

- FreeRTOS BSP 1.0.1

The IMU used in this demo is the Adafruit Precision NXP 9-DOF Breakout Board, which features the FXOS8700 3-Axis accelerometer and magnetometer, and the FXAS21002 3-axis gyroscope.

IMU data sampling and logging on the Master Core¶

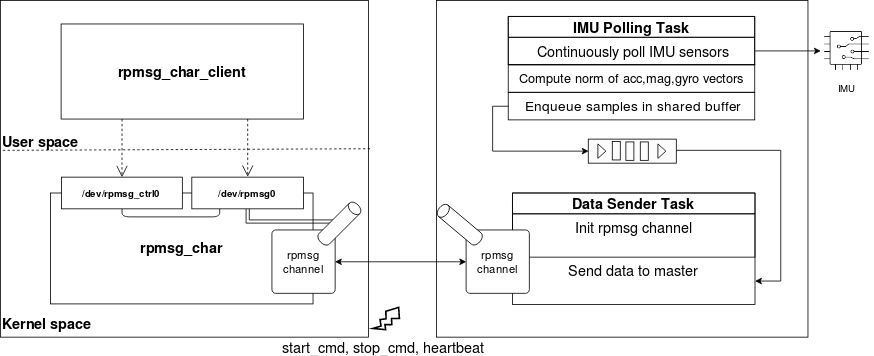

This section describes how we get data out from the IMU (processed by the M4) up to the master core. The following diagram shows an overview of the application’s architecture.

The right side represents the MCU running FreeRTOS, which runs two asynchronous tasks:

- IMU Polling Task continuously collects samples from the IMU, processes the accelerometer, magnetometer and gyroscope data, and puts the results in a buffer queue

- Data Sender Task initializes the RPMsg channel and sends data to the remote endpoint

Instead of sending raw IMU data, the IMU polling task calculates the norm (magnitude) of the accelerometer, magnetometer and gyroscope vectors. The three values are then enqueued in a buffer shared with the data sender task, which dequeues them and sends them to the master core once the master is ready to receive.
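As a worked illustration of the norm computation, assuming the usual Euclidean magnitude of a three-axis reading (the note does not state the exact formula used by the firmware, and the symbols below are illustrative only):

$$
\lVert \mathbf{a} \rVert = \sqrt{a_x^2 + a_y^2 + a_z^2}
$$

For example, a reading of $(a_x, a_y, a_z) = (3, 4, 12)$ gives $\sqrt{9 + 16 + 144} = \sqrt{169} = 13$. The same expression would apply to the magnetometer and gyroscope vectors, so each sample reduces nine raw axis values to three scalars.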

The left side represents the MPU running Yocto OE. The RPMsg character driver is used to export the master RPMsg endpoint as a character device, enabling interaction with the remote device from user-space. Finally, the rpmsg_char_client user-space application interacts with the character devices in order to initialize the endpoint interface device, read incoming data and log it to a text file.

Note: the RPMsg character driver was introduced in Linux kernel version 4.11. For the purpose of this demo, the driver has been backported to Linux kernel version 4.9.

Apart from logging the IMU data, another goal of this demo is to safely handle a kernel panic on the Linux side. For this, the MCU must know whether the MPU is running correctly. Inter-core signalling makes the MCU aware of the MPU status and lets it suspend the data flow when it is not needed. Three general-purpose interrupts (made available by the i.MX7 Messaging Unit) are triggered by the master core towards the remote core:

- start_cmd is used to signal that the master has created the endpoint interface (i.e. data flow can be started)

- stop_cmd is used to signal that the master has closed the endpoint interface (i.e. data flow can be suspended)

- heartbeat is used to signal that the master is alive (i.e. the system is up and running).

When the rpmsg_char_client application is started, the RPMsg endpoint on the master side is created and start_cmd is triggered; when the application is closed, the endpoint is destroyed and stop_cmd is triggered. The heartbeat is triggered periodically at a fixed rate (starting from the moment the RPMsg char driver is probed). The MCU uses a software timer to keep track of the master heartbeat. Each time the heartbeat interrupt is received, the timer is reset. If the timer expires, the remote core assumes that the master core is dead and stops sending data (while still buffering IMU data).

The following state diagrams show the control flow on both sides: the user application flow on the master side and the data sender task flow on the remote side. Note: on the remote side only the state diagram of the data sender task is shown, since the IMU polling task remains in the same "poll, compute norm of vectors, enqueue data in buffer" loop for the whole remote core lifecycle.

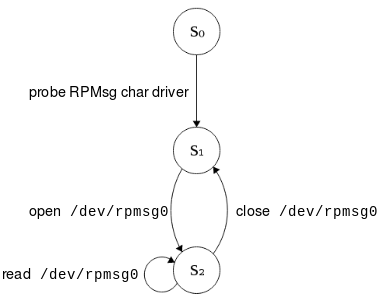

User application control flow¶

- S0: RPMsg channel is down

- S1: RPMsg channel is up, /dev/rpmsg0 is created

- S2: RPMsg channel is up, endpoint created, data is dumped into a log file

On the MPU side, a first transition occurs when the RPMsg char driver is probed. By opening the /dev/rpmsg0 character device, the RPMsg endpoint is created. Then data is read from the /dev/rpmsg0 device and logged to a text file. Finally, the endpoint is destroyed by closing the character device.
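The read-and-log step can be sketched in C as follows. This is only a minimal illustration, not the actual rpmsg_char_client source: the record layout (`struct imu_norms`, three floats) and the function name `log_samples` are hypothetical, since the note does not specify the message format, and the sketch assumes each read() returns exactly one record.

```c
#include <stdio.h>
#include <unistd.h>

/* Hypothetical payload layout: the wire format is not given in the note. */
struct imu_norms {
    float accel; /* norm of the accelerometer vector */
    float mag;   /* norm of the magnetometer vector */
    float gyro;  /* norm of the gyroscope vector */
};

/*
 * Read fixed-size records from fd and append one text line per record
 * to log. Stops on end-of-file, on a read error, or on a short record.
 * Returns the number of records logged.
 */
long log_samples(int fd, FILE *log)
{
    struct imu_norms s;
    long count = 0;

    for (;;) {
        ssize_t n = read(fd, &s, sizeof s);
        if (n != (ssize_t)sizeof s)
            break; /* EOF, error, or incomplete record */
        fprintf(log, "%f %f %f\n", s.accel, s.mag, s.gyro);
        count++;
    }
    fflush(log);
    return count;
}
```

In the demo setting, the descriptor would come from opening /dev/rpmsg0 and the stream from opening the log file; the endpoint setup through the control interface is left out here, as in the diagram.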

Note: in this first diagram the interaction with the driver control interface (i.e. /dev/rpmsg_ctrl0) is not shown for simplicity.

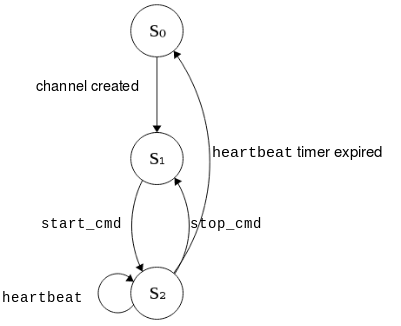

Data sender task control flow¶

- S0: RPMsg channel is down

- S1: RPMsg channel is up (sampling IMU sensor, buffering data)

- S2: RPMsg channel is up, sending data to the master core (sampling IMU sensor, buffering data)

On the MCU side, the first transition occurs with the RPMsg channel creation. When the start_cmd interrupt arrives, the data sender task starts sending data to the master core and starts the master heartbeat timer. The timer is reset every time a heartbeat signal arrives from the MPU. If stop_cmd is received, the MCU suspends the data flow towards the master and keeps buffering IMU data. If the heartbeat timer expires, the task destroys the RPMsg channel and waits for the master to be up again before trying to re-initialize it.
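The transitions described above can be modelled as a small state machine. The sketch below is a host-side illustration in plain C rather than the FreeRTOS task itself: the type and function names are hypothetical, the heartbeat timeout value is a parameter because the note does not give one, and it assumes the heartbeat timer only runs while data is being sent (S2).

```c
#include <stdint.h>

enum sender_state { S0_CHANNEL_DOWN, S1_CHANNEL_UP, S2_SENDING };

enum sender_event {
    EV_CHANNEL_CREATED, /* RPMsg channel has been established */
    EV_START_CMD,       /* start_cmd interrupt from the master */
    EV_STOP_CMD,        /* stop_cmd interrupt from the master */
    EV_HEARTBEAT,       /* heartbeat interrupt from the master */
    EV_TICK_MS          /* one millisecond has elapsed */
};

struct sender {
    enum sender_state state;
    uint32_t timeout_ms;   /* hypothetical heartbeat timeout */
    uint32_t remaining_ms; /* time left before the master is assumed dead */
};

void sender_init(struct sender *s, uint32_t timeout_ms)
{
    s->state = S0_CHANNEL_DOWN;
    s->timeout_ms = timeout_ms;
    s->remaining_ms = 0;
}

/* Apply one event and return the resulting state. */
enum sender_state sender_step(struct sender *s, enum sender_event ev)
{
    switch (s->state) {
    case S0_CHANNEL_DOWN:
        if (ev == EV_CHANNEL_CREATED)
            s->state = S1_CHANNEL_UP;
        break;
    case S1_CHANNEL_UP:
        if (ev == EV_START_CMD) {
            s->remaining_ms = s->timeout_ms; /* start heartbeat timer */
            s->state = S2_SENDING;
        }
        break;
    case S2_SENDING:
        if (ev == EV_HEARTBEAT) {
            s->remaining_ms = s->timeout_ms; /* master is alive: reset */
        } else if (ev == EV_STOP_CMD) {
            s->state = S1_CHANNEL_UP;        /* suspend flow, keep buffering */
        } else if (ev == EV_TICK_MS) {
            if (s->remaining_ms > 0)
                s->remaining_ms--;
            if (s->remaining_ms == 0)
                s->state = S0_CHANNEL_DOWN;  /* timer expired: drop channel */
        }
        break;
    }
    return s->state;
}
```

Keeping the transition logic in a pure function like this makes it testable off target; on the MCU the events would be raised by the Messaging Unit interrupts and a software timer callback.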

Parameters¶

On the IMU, the sample rates are set to 800 Hz for the FXAS21002 and 400 Hz for the FXOS8700. The MCU polls the IMU sensor board every 10 ms and uses a buffer queue with a length of 300 elements to store the norms of the accelerometer, magnetometer and gyroscope vectors. Every 100 ms, 10 items are dequeued from the buffer and sent over the RPMsg channel to the master endpoint on the MPU.
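Taking these figures at face value, and assuming one queue element per polling cycle, the MCU produces 100 elements per second, so a 300-element queue covers roughly 3 seconds of samples; sending 10 items every 100 ms drains at the same 100 elements per second. A possible shape for such a queue is sketched below in plain C. The names are hypothetical and the overflow policy (overwrite the oldest element when full) is an assumption, since the note does not say what happens when the buffer fills up.

```c
#include <stddef.h>

#define QUEUE_LEN 300 /* buffer length given in the note */
#define BURST_LEN 10  /* items sent per 100 ms, as given in the note */

/* Hypothetical element type: one norm per sensor. */
struct norm_sample {
    float accel;
    float mag;
    float gyro;
};

struct sample_queue {
    struct norm_sample items[QUEUE_LEN];
    size_t head;  /* index of the oldest element */
    size_t count; /* number of stored elements */
};

/*
 * Append a sample. When the queue is full the oldest element is
 * overwritten (assumed policy). Returns 1 if an element was lost.
 */
int queue_push(struct sample_queue *q, struct norm_sample s)
{
    size_t tail = (q->head + q->count) % QUEUE_LEN;

    q->items[tail] = s;
    if (q->count == QUEUE_LEN) {
        q->head = (q->head + 1) % QUEUE_LEN; /* oldest was overwritten */
        return 1;
    }
    q->count++;
    return 0;
}

/* Remove up to max of the oldest elements into out; returns how many. */
size_t queue_pop_burst(struct sample_queue *q, struct norm_sample *out,
                       size_t max)
{
    size_t n = 0;

    while (n < max && q->count > 0) {
        out[n++] = q->items[q->head];
        q->head = (q->head + 1) % QUEUE_LEN;
        q->count--;
    }
    return n;
}
```

On the target, the FreeRTOS queue API would be the more natural choice for sharing data between the two tasks; the plain array version is used here only so the logic can be exercised on a host.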

Handling a kernel panic¶

The last step is to figure out how to recover from a kernel panic on the Linux side. Using a watchdog timer to trigger a full reboot was not an option here: due to the i.MX7 architecture, the Cortex-M4 would be rebooted as well. The goal is to keep the Cortex-M4 running its application and restart only the Cortex-A7.

The solution adopted for this demo is Kexec. Kexec enables booting into a kernel different from the currently running one. It is frequently used in conjunction with the Kdump mechanism, to both reboot into a new kernel and dump the kernel memory in case of a crash for further debugging. Kexec acts like a soft reboot: it does not reinitialize all hardware peripherals. Therefore, rebooting with kexec does not affect the M4 core, which can continue running. Because a full reboot is bypassed, devices may be left in an unknown state. In this demo, all critical devices (e.g. the IMU) are assigned to and handled by the M4 core, therefore they are not reset in the soft-reboot phase.

In this demo, the zImage that is loaded post-crash is different from the one that was running pre-crash. Therefore, two kernel images are involved:

- a main kernel which is normally executed during the first boot

- a crash kernel which is booted exclusively when a kernel panic occurs

The second kernel image is lighter than the first one, so that it boots as fast as possible as an emergency kernel.

The following command is used to load the crash kernel image into a reserved section of memory and execute it in case of a kernel panic:

kexec -d -p ../crash_zImage --command-line="console=ttymxc0,115200 root=/dev/mmcblk0p1 reset_devices irqpoll maxcpus=1"

- irqpoll (appended to the crash kernel cmdline): with this option enabled, when an interrupt is not handled, the kernel searches all known interrupt handlers for it

- nosmp (appended to the crash kernel cmdline; given as maxcpus=1 in the command above): booting of the crash kernel has been limited to one out of the two A7 cores, as both cores are not needed in the demo use case

- reset_devices (appended to the crash kernel cmdline): forces drivers to reset the underlying device during initialization. Only some drivers support this option

- --load-panic option (-p in the command above): indicates that the crash kernel has to be loaded to a reserved memory area and executed as soon as a kernel panic occurs

Additionally, the crashkernel=128M option is appended to the main kernel cmdline, to indicate the amount of memory reserved for the crash kernel image.

Note: Kexec/Kdump support on ARM platforms is still experimental.

Pitfalls and open issues¶

Here are some pitfalls that have been encountered, as well as some open problems that require further investigation.

- When the remote core is ready to establish an RPMsg channel, it notifies the master core through a virtqueue kick function call. If this call arrives too early during the crash kernel booting, the system might hang. To overcome this problem, a delay has been added between the moment the remote core realizes that the master core is down and the moment when it tries to bring up the RPMsg channel again.

- Sometimes kexec hangs and does not complete the soft-reboot process. This happens more frequently when trying to continuously send data from remote to master, instead of sending a burst of data in a predefined interval. This might be due either to the many incoming interrupts or to the experimental support of Kexec on ARM; further investigation has to be done to find the root of this problem.

Video of the demo¶

The video shows the demo in action. The left part shows the prompt of Linux running on the Cortex-A7 (i.e. the master core), while the right part shows the output of the FreeRTOS application running on the Cortex-M4 (i.e. the remote core).

First, the user logs in on the Linux shell and launches the user-space application rpmsg_char_client. The remote core acknowledges that the master core is ready and starts sending data through the RPMsg channel. On the master side, data is read and logged to the samples.txt text file.

Then, the crash kernel image is loaded in the reserved portion of memory with kexec.

A kernel panic is then induced with:

# panic.sh script content

echo c > /proc/sysrq-trigger

Once the master core crashes, the remote core detects it, destroys the RPMsg channel and keeps buffering IMU data without sending it.

The crash kernel is immediately booted on the Linux side. When the master core is up again and the user-space application is re-launched, the RPMsg channel is reinitialized and the remote core starts sending the previously buffered data to the master core. All data is recovered by the master and logged to the samples.txt file.